Deepfake Deception: How AI-Powered Fraud Is Targeting Corporate Leaders

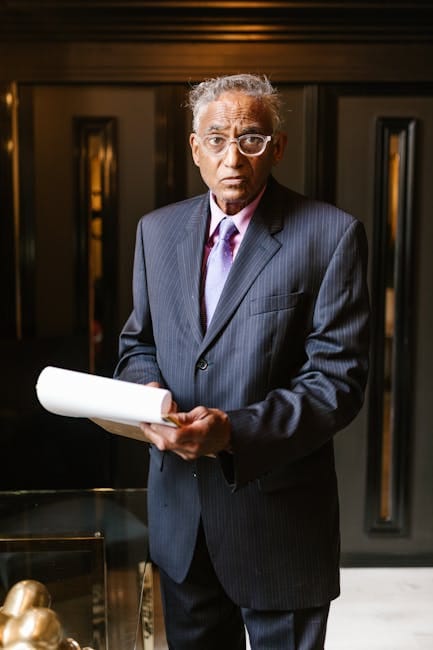

A sophisticated deepfake video showing the chief executive of the Bombay Stock Exchange giving investment advice circulated on social media at the start of this year, marking another escalation in AI-powered corporate fraud. The fabricated clip, which appeared to show Sundararaman Ramamurthy recommending specific stocks, highlighted a growing threat: the use of deepfakes has risen by nearly 3,000% over the past two years.

"It was in the public domain where many people could see it, and get cheated into buying or selling stocks, as if I'd recommended them," Ramamurthy explained, noting the exchange immediately filed complaints to have the video removed and issued market warnings. The incident underscores how rapidly advancing artificial intelligence is creating new vulnerabilities for corporations and their leadership.

Background and Context

The proliferation of deepfake technology represents a paradigm shift in corporate security threats. "The latest data shows that over the past two years or so, we've seen an increase of almost 3,000% in the number of deepfakes being utilized," says Karim Toubba, chief executive of US-based password security company LastPass. This explosive growth has been fueled by both the growing accessibility and the falling cost of AI tools capable of generating convincing synthetic media.

"Deepfakes are becoming very, very easy to do," notes Matt Lovell, co-founder and CEO of UK-based cyber-security company CloudGuard. "To generate video and audio quality of extremely accurate specifications - it takes minutes." The technical barrier that once limited deepfake creation to sophisticated state actors has effectively vanished, opening the field to criminal enterprises and opportunistic fraudsters.

Key Figures and Entities

The Bombay Stock Exchange incident represents just one facet of a broader pattern of deepfake attacks targeting corporate leadership. Toubba himself was targeted in 2024 when an employee received what appeared to be an audio message from him requesting urgent assistance. The employee grew suspicious because the message arrived via WhatsApp on a personal device rather than through company-sanctioned channels, and that suspicion prevented a potential security breach.

British engineering firm Arup was less fortunate. According to Hong Kong police, an employee in its Hong Kong office received a message from someone claiming to be the firm's London-based chief financial officer regarding a "confidential transaction." The employee subsequently joined a video call with multiple supposed colleagues, all of whom were deepfakes. Following this call, $25 million (£18.5 million) was transferred to five different bank accounts before the deception was discovered.

Legal and Financial Mechanisms

The financial mechanics of deepfake fraud exploit traditional corporate processes while leveraging new technological capabilities. "For, say, a simple, single individual-led attack, you're looking at $500 to $1,000 with the use of largely free tools," explains Lovell. "For a more sophisticated attack, you're looking at between $5,000 and $10,000." This relatively low cost of entry enables fraudsters to cast wide nets and attempt multiple approaches until finding vulnerable targets.

Response mechanisms are emerging alongside the threat. Companies can now deploy verification software that analyses facial expressions, head movements, and even subtle blood flow patterns in the face to determine whether a subject is real or AI-generated. "In your cheeks or just underneath your eyelids, we'll be looking for changes in blood flow when a person is talking or presenting," Lovell explains. "That's really where we can tease out whether it's AI-generated or it's real."
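To give a rough sense of what "looking for changes in blood flow" can mean in practice, the sketch below checks whether a series of per-frame skin-brightness readings contains a rhythm in the range of a human pulse. It is an illustrative toy under stated assumptions, not a description of how CloudGuard's or any other vendor's software works: the function name, the 0.7 to 4.0 Hz band and the idea of feeding in one averaged brightness value per video frame are all assumptions made for this example, and a real detector would need face tracking, noise handling and far more evidence before drawing any conclusion.

```python
import math

def pulse_like_rhythm(samples, fps, low_hz=0.7, high_hz=4.0):
    """Find the strongest rhythm inside an assumed heart-rate band.

    samples: hypothetical per-frame average brightness of a skin region.
    fps:     frames per second of the video.
    Returns (frequency_hz, share), where share is the fraction of the
    signal's total spectral power held by that single frequency.
    Returns (None, 0.0) when the signal does not vary in that band.
    """
    n = len(samples)
    mean = sum(samples) / n
    centered = [s - mean for s in samples]  # remove the constant brightness level

    best_freq, best_power, total_power = None, 0.0, 0.0
    # Plain discrete Fourier transform over the positive frequencies.
    for k in range(1, n // 2 + 1):
        re = sum(c * math.cos(2 * math.pi * k * i / n) for i, c in enumerate(centered))
        im = sum(c * math.sin(2 * math.pi * k * i / n) for i, c in enumerate(centered))
        power = re * re + im * im
        total_power += power
        freq = k * fps / n
        if low_hz <= freq <= high_hz and power > best_power:
            best_freq, best_power = freq, power

    if best_freq is None or total_power == 0.0:
        return None, 0.0
    return best_freq, best_power / total_power

# Synthetic 10-second clip at 30 frames per second with a 1.2 Hz (72 bpm) flicker.
fps = 30
with_pulse = [0.5 + 0.01 * math.sin(2 * math.pi * 1.2 * i / fps) for i in range(300)]
# A perfectly flat signal, standing in for footage with no pulse-like variation.
flat = [0.5] * 300

pulse_freq, pulse_share = pulse_like_rhythm(with_pulse, fps)
flat_freq, flat_share = pulse_like_rhythm(flat, fps)
```

In this toy setup the synthetic clip yields a dominant rhythm at the planted 1.2 Hz, while the flat signal yields none. Genuine footage is far noisier, and the absence of a clear rhythm would at most be one warning sign among many rather than proof of a fake.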

International Implications and Policy Response

The global nature of deepfake threats presents significant challenges for regulators and for coordination between law enforcement agencies. "It's a race, between who can deploy a technology and who can thwart that technology as quickly as possible," says Toubba. This technological arms race is unfolding across jurisdictions with varying levels of preparedness and regulation.

The broader implications extend beyond immediate financial losses to fundamental questions of trust in digital communications. "You would never want to simply jump on a video call with someone and transfer $25 million," observes Stephanie Hare, a tech researcher and co-presenter of the BBC's AI Decoded TV programme. "Companies are having to take extra steps to secure these types of communications. That's the brave new world we're in now."

Experts warn that response capabilities may not be keeping pace with the threat. "Attack vectors are accelerating faster than we can accelerate defence automation and protection," Lovell cautions. "Are people moving fast enough to respond to the speed the threat is developing? Absolutely not." This gap is exacerbated by a global shortage of cybersecurity professionals capable of defending against increasingly sophisticated attacks.

Sources

This report draws on statements from corporate executives including Sundararaman Ramamurthy of the Bombay Stock Exchange, Karim Toubba of LastPass, and Matt Lovell of CloudGuard, as well as official records from Hong Kong police regarding the Arup incident and expert commentary from technology researcher Stephanie Hare. Information on deepfake proliferation trends and costs was provided through direct interviews with cybersecurity industry leaders.