Deepfake AI Tool Marketed on Darknet Threatens Global KYC Systems

The rapid advancement of artificial intelligence has introduced sophisticated new threats to the global financial infrastructure. Recent reports indicate that a darknet actor is actively marketing a tool capable of bypassing Know Your Customer (KYC) protocols through the use of deepfake technology and voice manipulation. This development poses a significant challenge to banks and cryptocurrency platforms that rely on automated identity verification to prevent financial crime.

According to a recent alert posted by CoinMarketCap, the software utilizes generative AI to mimic human appearance and speech with high precision. By creating synthetic identities that can pass standard security checks, this technology marks a pivotal shift in digital fraud tactics, moving beyond the theft of real identities to the manufacturing of entirely new ones.

Background and Context

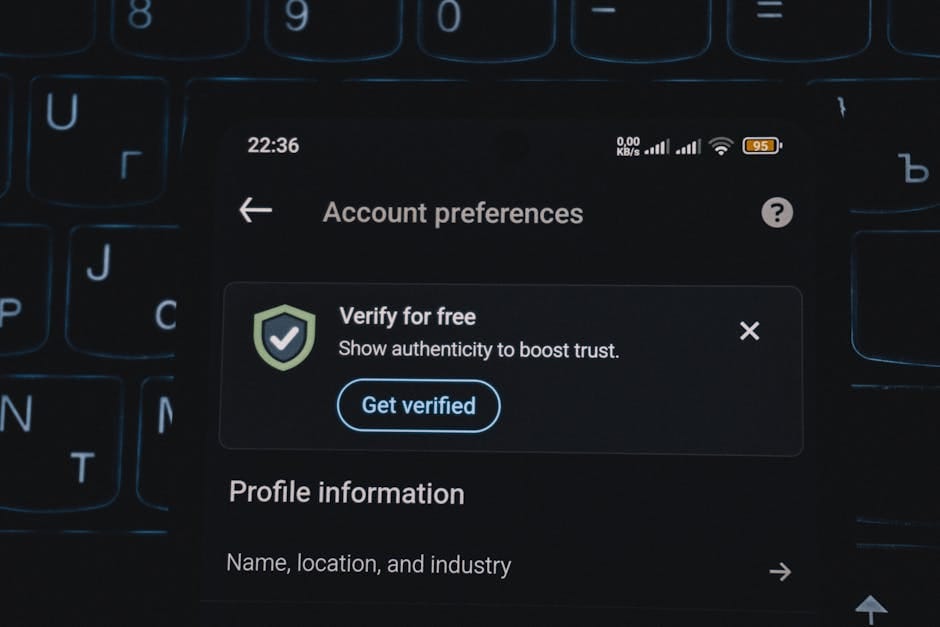

The financial sector has increasingly adopted digital onboarding processes that depend on biometric data to verify user identity. These systems typically combine document verification, facial recognition, and liveness detection, a check designed to confirm that the person is real and present. However, as defensive technologies have matured, so too have the offensive capabilities of cybercriminals.

Industry analysts warn that the accessibility of deepfake tools has lowered the barrier to entry for large-scale fraud. Where attackers once needed physical access to stolen documents, they can now generate convincing forgeries and matching synthetic faces on demand. This evolution places immense pressure on fintech companies and traditional banks to reassess the resilience of their current security frameworks.

Key Figures and Entities

While the specific darknet actor remains anonymous, their actions have drawn scrutiny from across the cryptocurrency and banking sectors. The software in question is designed to target the onboarding processes of major financial institutions and crypto exchanges, exploiting the very systems meant to deter money laundering and terrorist financing.

The public disclosure of this tool came via social media, where CoinMarketCap highlighted the threat on April 7, 2026. The entity behind the tool claims it can defeat liveness detection mechanisms that require blinking or head movement, thereby allowing synthetic identities to authenticate successfully as if they were real humans.

Legal and Financial Mechanisms

At the core of this threat is the claimed ability to circumvent three fundamental layers of modern KYC systems: document verification, facial liveness detection, and voice verification. The AI tool generates synthetic identity documents that appear legitimate to automated scanners. Simultaneously, it produces deepfake imagery that simulates natural facial movements, satisfying liveness detection requirements that static photos cannot.

Perhaps most concerning is the integration of voice cloning capabilities. The software reportedly requires only a small audio sample to replicate a user's voice, enabling real-time interaction during verification calls. By combining visual and auditory mimicry, the tool creates a holistic illusion of identity that standard pattern recognition software struggles to flag as fraudulent.

International Implications and Policy Response

The emergence of such tools suggests that static, one-time verification methods may soon be obsolete. The scalability of the technology, which reportedly allows a single actor to generate multiple fake identities in minutes, presents a systemic risk to the integrity of digital finance. Consequently, regulatory bodies and financial institutions are being forced to consider more robust, multi-layered defense strategies.
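One layer that such a strategy could include is a velocity check, which looks for many onboarding attempts arriving from the same source within minutes. The sketch below is illustrative only and is not drawn from the CoinMarketCap alert: the `VelocityCheck` class, the `source_key` (for example, a device fingerprint), and the default limits are all assumptions chosen for the example.

```python
from collections import defaultdict, deque


class VelocityCheck:
    """Flag a source that submits too many onboarding attempts in a short window.

    Hypothetical helper: the thresholds are placeholders, not recommended values.
    """

    def __init__(self, max_attempts: int = 3, window_seconds: float = 600.0):
        self.max_attempts = max_attempts
        self.window_seconds = window_seconds
        # Maps a source key (e.g. a device fingerprint) to recent attempt times.
        self._attempts = defaultdict(deque)

    def record(self, source_key: str, timestamp: float) -> bool:
        """Record one attempt and return True if the source exceeds the limit."""
        window = self._attempts[source_key]
        window.append(timestamp)
        # Discard attempts that are older than the sliding window.
        while window and timestamp - window[0] > self.window_seconds:
            window.popleft()
        return len(window) > self.max_attempts


if __name__ == "__main__":
    check = VelocityCheck(max_attempts=3, window_seconds=600.0)
    for t in (0.0, 60.0, 120.0, 180.0):
        print(t, check.record("device-abc", t))
```

A check like this would not detect a deepfake on its own. It only illustrates how a second, independent signal can catch mass-produced identities that each pass the biometric step individually.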

Experts suggest that the future of digital verification lies in behavioral analysis and continuous monitoring rather than point-in-time checks. By tracking user behavior post-onboarding, systems may identify anomalies that indicate the presence of a bot or synthetic identity. However, this approach feeds a technological arms race, as defensive measures must constantly adapt to keep pace with rapidly evolving generative AI models.
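To make the idea of post-onboarding behavioral monitoring concrete, the following minimal sketch scores a new observation against an account's own history using a z-score. It is an assumption-laden illustration, not a method described in the source: the function names, the choice of a z-score, the example metric (transaction amounts), and the threshold of 3.0 are all hypothetical.

```python
from statistics import mean, stdev


def anomaly_score(baseline: list[float], observed: float) -> float:
    """Return how many standard deviations `observed` lies from the baseline mean."""
    if len(baseline) < 2:
        raise ValueError("need at least two baseline observations")
    mu = mean(baseline)
    sigma = stdev(baseline)
    if sigma == 0:
        # A perfectly constant baseline: any deviation at all is maximally unusual.
        return 0.0 if observed == mu else float("inf")
    return abs(observed - mu) / sigma


def is_anomalous(baseline: list[float], observed: float, threshold: float = 3.0) -> bool:
    """Flag the observation when its score exceeds the (assumed) threshold."""
    return anomaly_score(baseline, observed) > threshold


if __name__ == "__main__":
    # Hypothetical history of transaction amounts for one account.
    history = [100.0, 110.0, 90.0, 105.0, 95.0]
    print(is_anomalous(history, 102.0))
    print(is_anomalous(history, 5000.0))
```

Production systems would likely draw on many signals and more robust models. The point of the sketch is only that a continuous check compares ongoing behavior to a baseline instead of relying on a single decision at onboarding.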

Sources

This report draws on social media reporting by CoinMarketCap and industry analysis regarding AI-driven identity fraud and biometric security vulnerabilities.